We have already explored tools and apps such as Power Automate, Power Apps, Power Virtual Agents, the Common Data Model, and the Common Data Service in previous articles. So what’s next? The next step, our dear reader, is application lifecycle management (ALM) for Power Platform components alongside Finance and Operations apps.

From an application lifecycle management standpoint, it’s essential to note that we need to manage ALM for our Power Platform components, much like we would for F&O. But there are clear differences in how the application lifecycle works for the Power Platform.

Environments Overview

Let’s talk about environments first. Environments are containers that administrators can use to manage apps, flows, connections, and other assets. When you create an environment, it’s up to you whether to add a database to it. If you do, that database becomes your Common Data Service database. If you don’t need a mechanism to store data and are just building some Power Apps and flows, for instance, you can create an environment without a database.

You can think of an environment as a scope for the lifecycle of your project (dev, test, prod) and as a scope for permissions, so you may create separate environments for different purposes. For instance, if you are building a sales app, only salespeople have access to that environment, and the data in it is limited to what the sales app requires. As another example, if you are building a portal, you might create a separate environment specific to that portal.

You should carefully consider how many environments to create. Much like with F&O environments, you can select the region where the environment is deployed, and you can create many environments for different purposes. A CDS environment comes with your Finance & Operations implementation, but deploying additional Common Data Service database environments may require additional licensing.

Here are some of the key facts and things to know about environments:

- Again, environments are tied to a geographic location that’s configured when you create the environment. There isn’t an ability to move an environment to another geolocation or another Azure datacenter. Hence, if you need it in a different location, you will have to deploy a new environment and use ALM tools to copy the database and move everything from one environment to the other;

- Environments can also be used to target different audiences for different purposes;

- Every tenant comes with a default environment where all licensed Power Apps and Power Automate users are allowed to create apps and flows. This is the environment that’s used for citizen developer scenarios;

- Non-default environments offer more control over permissions. Furthermore, their creation can be restricted to global and service admins from the Power Platform admin center.

Environment Strategy

When you are thinking about your environments, you’ll also want to define an environment strategy for the deployment of your CDS environments. Much like you need an environment strategy for F&O, you’ll need one for CDS. It covers not just how many and what types of environments you have, but also how each environment is connected to other applications. Hence, consider creating a diagram that shows those linkages.
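Before drawing such a diagram, it can help to write the plan down as data. The following is a minimal Python sketch, not a Power Platform API: the `Environment` class, the environment names, the regions, and the linked applications are all hypothetical and only illustrate how the linkages could be recorded and listed.

```python
from __future__ import annotations

from dataclasses import dataclass, field


@dataclass
class Environment:
    """One planned environment (all fields are illustrative assumptions)."""
    name: str
    purpose: str              # e.g. "dev", "test", "prod", "poc"
    region: str
    has_database: bool = True
    linked_apps: list[str] = field(default_factory=list)


def linkage_edges(environments):
    """Return (environment, application) pairs: the edges of the diagram."""
    return [(env.name, app) for env in environments for app in env.linked_apps]


def unlinked(environments):
    """Return names of environments with no documented linkage yet."""
    return [env.name for env in environments if not env.linked_apps]


# Hypothetical plan: every name and link below is made up for illustration.
plan = [
    Environment("sales-dev", "dev", "Europe", linked_apps=["F&O dev"]),
    Environment("sales-test", "test", "Europe", linked_apps=["F&O UAT"]),
    Environment("sales-prod", "prod", "Europe",
                linked_apps=["F&O production", "Power BI"]),
    Environment("training", "poc", "Europe", has_database=False),
]

for env_name, app in linkage_edges(plan):
    print(f"{env_name} -> {app}")
print("No linkages documented:", unlinked(plan))
```

Each printed pair corresponds to one arrow in the diagram, and the final line flags environments whose connections still need to be decided.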

It does make sense to assign your admins the Power Platform service admin role to grant them full access to Power Apps, Power Automate, and Power BI. Also, consider restricting the creation of net-new trial and production environments to those admins. You don’t want anyone creating new CDS environments whenever they like, especially because there is a cost associated with those environments.

We also recommend that you treat the default environment as a personal productivity environment for your business groups. We suggest renaming it to “personal productivity”. This is not required, but it makes the purpose of the environment obvious.

Another suggestion is to establish a process for requesting access to environments or the creation of new ones. You might need to create a new environment for specific groups or applications, and you might have individual-use environments for proofs of concept or training that you conduct in your organization.

Solutions

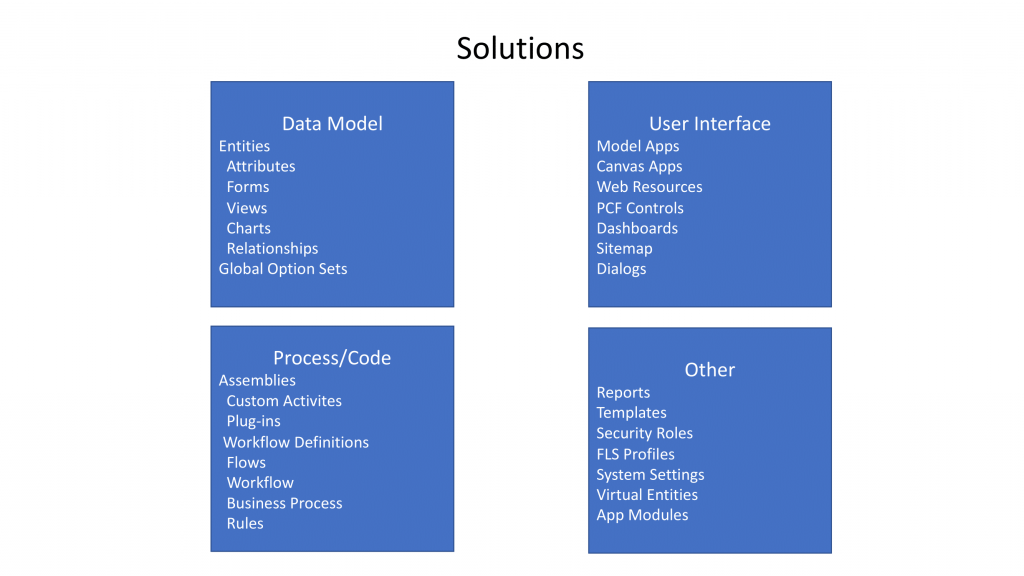

Solutions are used to package and maintain the components that make up one or more Power Apps apps, Power Automate flows, and Power Virtual Agents bots. This includes things like portals and UI flows, as well as AI Builder projects that you create.

Solutions are created and authored by a publisher. The components that go inside a solution are as follows:

- Data Model

- User Interface

- Process/Code

- Other (Reports, Templates, etc.)

There are two types of solutions: managed and unmanaged. Unmanaged solutions are used in development environments while you are making configuration changes to your application. Solutions are exported as unmanaged and then checked into your source control system. Unmanaged solutions should be considered your source.

Managed solutions, on the other hand, are used to deploy to any environment outside of your development environment. They should be generated by a build server and considered as a build artifact.
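The rule described above can be expressed as a small lookup. This is a minimal sketch under stated assumptions: the stage labels in `DEV_STAGES` and the function name `solution_type_for` are hypothetical and are not part of any Power Platform tooling.

```python
# Stage labels treated as development environments (an assumption for this sketch).
DEV_STAGES = {"dev", "development"}


def solution_type_for(stage: str) -> str:
    """Return the solution type to use in an environment of the given stage.

    Development environments hold unmanaged solutions (the source);
    every other environment receives a managed solution (the build artifact).
    """
    return "unmanaged" if stage.strip().lower() in DEV_STAGES else "managed"


for stage in ("dev", "test", "prod"):
    print(stage, "->", solution_type_for(stage))
```

A check like this could sit in a deployment script to reject an unmanaged solution that is about to be imported outside of development.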

Solutions: DO’s and DON’Ts

It’s important to know that every solution requires a publisher, and the same publisher can be associated with multiple solutions. Two default publishers are included: the CDS default publisher and the default publisher for your organization ID. It’s strongly recommended to create your own publisher for all of your solutions. Don’t use the default publishers!

When you import your assets through a managed solution, the publisher owns those assets. As a separate recommendation, include only the assets and sub-assets that have been changed or modified, not the entire entity.

If you create a brand-new entity from scratch, you would want to include the entire entity. But if you have only changed, let’s say, a form, we recommend including only the subcomponents or sub-assets that you have actually modified. This reduces the import time, size, and complexity of the solution you are importing. It also reduces the code base stored in your source control and helps reduce collisions when multiple developers work on assets of the same component, such as an entity.
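The inclusion rule can be sketched as a small selection function. This is an illustration only: the `EntityChange` class, the `ENTIRE_ENTITY` marker, and the entity and subcomponent names are hypothetical, and the real selection happens when you add components to a solution.

```python
from dataclasses import dataclass, field

# Marker meaning "include the whole entity" (a convention made up for this sketch).
ENTIRE_ENTITY = "*"


@dataclass
class EntityChange:
    """What a developer changed on one entity (illustrative model)."""
    entity: str
    is_new: bool
    modified_subcomponents: list = field(default_factory=list)


def components_to_include(changes):
    """Map each entity to what should go into the solution.

    New entities are included whole; existing entities contribute only
    their modified subcomponents; untouched entities are left out.
    """
    included = {}
    for change in changes:
        if change.is_new:
            included[change.entity] = [ENTIRE_ENTITY]
        elif change.modified_subcomponents:
            included[change.entity] = sorted(change.modified_subcomponents)
    return included


# Hypothetical change set for illustration.
changes = [
    EntityChange("new_salesvisit", is_new=True),
    EntityChange("account", is_new=False, modified_subcomponents=["Main form"]),
    EntityChange("contact", is_new=False),
]
print(components_to_include(changes))
```

Here the new entity is included whole, the existing entity contributes only its changed form, and the untouched entity is omitted.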

Power Platform Build Tools for Azure DevOps

Let’s also talk a little about the Power Platform Build Tools for Azure DevOps, which target pro-dev experiences.

You can use the tools to automate common build and deployment tasks related to Power Apps, Power Automate, and Power Virtual Agents. This includes tasks like:

- Provisioning and de-provisioning of environments;

- Synchronization of solution metadata when you move something between development environments, source control, or another environment;

- Build artifact generation;

- Deployment to downstream environments;

- Static analysis checks against your solution by using the Power Apps checker service.

The Basic Process

It’s important to note that when you use Finance & Operations, you can combine your F&O build steps with your Power Apps, Power Automate, or Common Data Service build objects in the same build.

ALM Powered By Azure DevOps

Taking a closer look at the process flow of the application lifecycle management when we use Power Platform build tools, we will see that it’s separated into three phases:

- The initial pipeline initiates a development environment, typically daily;

- Then, the build pipeline is automated to get rid of manual steps. You still need to run unit tests manually, but there is no longer any need to manually upload to the solution checker, export, unpack, and push;

- Finally, the automated release pipeline removes manual steps from deployment, so you can publish weekly, daily, or even hourly, for instance.
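To make the split between automated and manual work in the build pipeline concrete, here is a minimal Python sketch. It is a plain data model, not an Azure DevOps pipeline definition: the `Step` class and step names are hypothetical, and the order of the steps is an assumption rather than something the process above prescribes.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Step:
    """One step of the build pipeline (illustrative model)."""
    name: str
    automated: bool


# Step order is an assumption made for this sketch.
BUILD_PIPELINE = [
    Step("export solution from development", automated=True),
    Step("upload to solution checker", automated=True),
    Step("unpack solution", automated=True),
    Step("push to source control", automated=True),
    Step("run unit tests", automated=False),
]


def manual_steps(pipeline):
    """Return the names of steps a person still has to perform."""
    return [step.name for step in pipeline if not step.automated]


def automated_steps(pipeline):
    """Return the names of steps the pipeline runs on its own."""
    return [step.name for step in pipeline if step.automated]


print("Automated:", automated_steps(BUILD_PIPELINE))
print("Still manual:", manual_steps(BUILD_PIPELINE))
```

Listing the steps this way makes it easy to see what remains manual after each phase and to track progress as more of the process is automated.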